How I Built an AI Sales Agent for My Directory in One Day With Claude Code

I run TapWaterData.com. It's a directory of 18,700 water utilities across the US -- every one of them has verified contacts, service areas, and EPA compliance data. I've been running it for two years.

Companies that sell to water utilities -- pipes, chemicals, pumps, monitoring software -- they buy this kind of data from ZoomInfo and Apollo for thousands a year. Same data I have.

And yeah, I get inbound sales. Someone Googles "water utility contacts," finds my site, reaches out. It works. But it's unpredictable. One month I'll close a few deals. Next month, crickets. I can't control when someone decides to search.

I kept staring at this gap. I have 18,700 verified utility contacts. There are hundreds of companies who'd pay for that data. But I was just... waiting for them to find me.

After a particularly quiet month, I decided to stop waiting.

I opened Claude Code, described what I wanted, and it built the entire system in a single session. An AI sales agent -- 8,800 lines of code, 39 files -- that runs 24/7 on my Mac Mini. It finds companies that sell to water utilities, researches them, and writes personalized outreach using data only my directory has.

I didn't write the code. I wrote a spec. Claude Code did the rest.

Seven days later: 665 companies discovered. 306 personalized emails drafted. 60% open rate on the 22 emails I approved and sent. Total cost: $27.

Let me walk you through exactly what happened.

- 665 companies discovered

- 60% email open rate

- $27 weekly cost

- 8,800 lines of code

The Realization That Changed Everything

Here's what most directory monetization advice sounds like: sell featured listings, run display ads, charge for premium access.

I tried thinking about it differently.

My directory isn't just a website. It's 18,700 rows of data that sales teams at water industry vendors spend weeks building manually. Who runs procurement at each utility? How big is their service area? Any EPA violations? What conferences do their vendors go to?

When I send a cold email, I can reference all of that. A generic sales email says "we help companies like yours." Mine says "you just expanded into Texas -- I have procurement contacts for 1,142 Texas utilities."

That's not a cold email anymore. That's a warm intro backed by data nobody else has.

So I stopped thinking of TapWaterData as a website people visit. I started thinking of it as an unfair advantage in outbound sales.

Before: Directory = Website

- Wait for inbound traffic

- Monetize with ads/listings

- Growth = more SEO

- Moat = traffic

After: Directory = Data Asset

- Go find the buyers

- Monetize with outbound

- Growth = more outreach

- Moat = data nobody else has

I Didn't Build It. I Described It.

This is the part that might surprise you.

I'm not a backend engineer. I didn't sit down and write 8,800 lines of TypeScript. I opened Claude Code -- Anthropic's coding agent -- and described what I wanted.

My spec was basically this: "I have a directory of 18,700 water utilities. Build me an agent that runs on a loop, finds companies selling to these utilities, classifies them, finds the right contact person, researches the company for timing signals, and writes a personalized cold email using my directory data as the value prop."

One session. A few hours. Claude Code built the whole thing. Database schema, API clients, scraping logic, LLM classification, email drafting, SendGrid integration, a React dashboard for reviewing outreach, and a PM2 config to run it 24/7.

I reviewed the output, tested it, and hit start.

I know that sounds too easy. And honestly, it kind of was. The hard part wasn't the code. It was knowing what to ask for. The spec -- the clear description of what each stage should do, what data it should use, what the output should look like -- that's where the real work happened.

What I Did

- Wrote the spec/PRD

- Defined the ICP criteria

- Chose the APIs

- Reviewed the output

- Tested and approved emails

Time: ~2 hours thinking

What Claude Code Built

- 39 source files

- 8,800 lines of TypeScript

- Database schema (8 tables)

- API server + endpoints

- React dashboard

- Agent loop orchestrator

- Email tracking system

- PM2 config for 24/7

Time: ~1 session

How It Works: Following One Company Through

Let me make this concrete. Here's what happens to a single company as it flows through the pipeline.

AquaPure Corp shows up on the AWWA conference exhibitor list. (Composite example, but this is exactly how the real pipeline works.)

Pipeline: One Company's Journey

1. Find

Scrape AWWA exhibitor list → new company added to database

2. Classify

Claude reads website → "Tier A, sells water treatment chemicals, national sales team" — Score: 72/100

3. Enrich

Look up domain → find Randy Draper, VP Business Development. Email verified.

4. Research

Scan for timing signals → just won Texas contract, posted 3 sales jobs. Growing fast.

5. Write

Claude drafts personalized email using dossier + directory data as the value prop.

6. Send & Track

Review, approve, SendGrid delivers. Opened 2 hours later.

Total time per company: ~2 minutes | Loop: every hour | Orchestrator: 147 lines

The orchestrator -- the code that runs the loop -- is 147 lines. Each step is one function. They chain together in a loop that runs every hour.
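For the builders, here's a minimal sketch of what a loop like that could look like. This is not the actual 147-line orchestrator: the `Company` shape, the stage names, and the `stub` steps are made up for illustration, and the real steps would call an LLM, an email finder, and a scraper. The loop stops at "drafted" because sending only happens after human review.

```typescript
// Illustrative only: the stage names and Company shape are assumptions,
// not the real schema.
type Stage = "found" | "classified" | "enriched" | "researched" | "drafted";

interface Company {
  name: string;
  stage: Stage;
  log: string[];
}

type Step = (c: Company) => Promise<Company>;

// Stub standing in for a real step (LLM call, email lookup, scrape, ...).
const stub = (next: Stage): Step => async (c) => ({
  ...c,
  stage: next,
  log: [...c.log, next],
});

// One function per step, keyed by the stage a company is currently in.
const steps: Record<Exclude<Stage, "drafted">, Step> = {
  found: stub("classified"),
  classified: stub("enriched"),
  enriched: stub("researched"),
  researched: stub("drafted"),
};

// Push one company as far as it will go. A failing step leaves the company
// at its current stage so the next cycle retries it.
async function advance(company: Company): Promise<Company> {
  let current = company;
  for (;;) {
    const stage = current.stage;
    if (stage === "drafted") return current; // now waits for human review
    try {
      current = await steps[stage](current);
    } catch (err) {
      console.error(`${current.name}: step after "${stage}" failed`, err);
      return current;
    }
  }
}

// One pass over every pending company.
async function runCycle(pending: Company[]): Promise<Company[]> {
  const results: Company[] = [];
  for (const company of pending) {
    results.push(await advance(company));
  }
  return results;
}
```

Running it hourly is then a single `setInterval` around `runCycle` with a delay of `60 * 60 * 1000` milliseconds.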

The Review Queue: Every Email Gets Human Approval

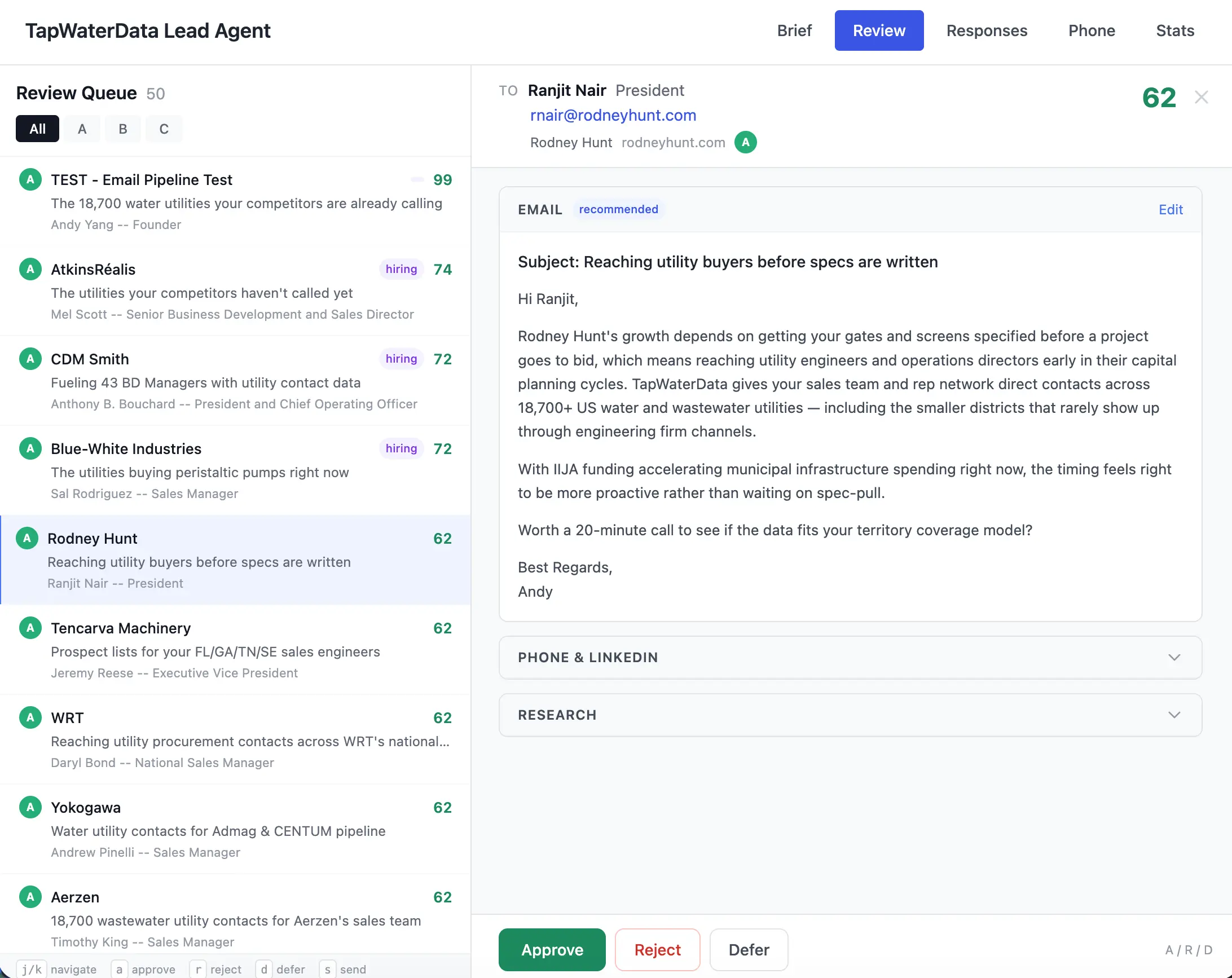

The dashboard shows every draft with the recipient, company, score, and full email preview. I approve, reject, or defer each one. Keyboard shortcuts (j/k/a/r/d/s) make it fast to review dozens at a time.

Why the Emails Actually Work

Here's what I want to be honest about: the AI isn't the secret sauce. The data is.

Claude does two things in this system. It classifies companies ("do they sell to water utilities? A, B, or C tier?") and it writes emails. That's it. Everything else is normal code -- HTTP requests, database queries, API calls.
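To show how thin the AI layer can be, here's a rough sketch of the "normal code" side of classification: validating the model's reply before it goes into the database. I'm assuming the model is asked to answer in JSON with `tier`, `score`, and `summary` fields. Those names and the `parseClassification` helper are hypothetical, not taken from the real codebase.

```typescript
type Tier = "A" | "B" | "C";

interface Classification {
  tier: Tier;
  score: number; // 0-100
  summary: string;
}

const TIERS: readonly string[] = ["A", "B", "C"];

// LLM output is just text, so parse it defensively and reject anything
// that doesn't match the expected shape.
function parseClassification(raw: string): Classification | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null; // not valid JSON
  }
  if (typeof data !== "object" || data === null) return null;

  const { tier, score, summary } = data as Record<string, unknown>;
  if (typeof tier !== "string" || !TIERS.includes(tier)) return null;
  if (typeof score !== "number" || score < 0 || score > 100) return null;
  if (typeof summary !== "string") return null;

  return { tier: tier as Tier, score, summary };
}
```

A `null` result means the company stays unclassified and gets retried on a later cycle instead of landing in the queue with a bad tier.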

But here's why the emails get 60% opens instead of the industry average of 21%.

Before writing anything, the agent builds a dossier. Seven questions about the company:

- What do they sell?

- How do they sell it? Direct, distributors, reps?

- What territory?

- Growing? Hiring? New contracts?

- What's their pain point in reaching utilities?

- What data from MY directory helps them most?

- What's the angle?

Then Claude writes the email using that dossier plus my 18,700 utility contacts as the offer.
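The seven questions map naturally onto a small data structure. Here's a sketch of how a dossier could be turned into a drafting prompt. The field names, the `buildEmailPrompt` helper, and the prompt wording are all my assumptions for illustration. The real prompt is part of the spec, not shown here.

```typescript
// One field per dossier question. Names are illustrative.
interface Dossier {
  product: string;         // What do they sell?
  salesModel: string;      // Direct, distributors, reps?
  territory: string;       // What territory?
  growthSignals: string[]; // Growing? Hiring? New contracts?
  painPoint: string;       // Pain point in reaching utilities
  directoryFit: string;    // Which directory data helps them most
  angle: string;           // The angle for the email
}

function buildEmailPrompt(contact: string, company: string, d: Dossier): string {
  // The research step can come back empty, so say so explicitly
  // rather than letting the model make up a timing hook.
  const signals =
    d.growthSignals.length > 0
      ? d.growthSignals.join("; ")
      : "none found (do not invent any)";

  return [
    `Write a short cold email to ${contact} at ${company}.`,
    `What they sell: ${d.product}`,
    `How they sell: ${d.salesModel}`,
    `Territory: ${d.territory}`,
    `Growth signals: ${signals}`,
    `Pain point: ${d.painPoint}`,
    `Directory data to offer: ${d.directoryFit}`,
    `Angle: ${d.angle}`,
    `Use only the facts above. Keep it under 80 words.`,
  ].join("\n");
}
```

The point of the structure is that the offer line (`directoryFit`) is always filled from directory data, so every draft leads with something a generic tool can't say.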

Anyone can ask ChatGPT to write a cold email. Nobody else can say "I have procurement contacts for 1,142 Texas water utilities." That's the difference. The AI is the pen. Your directory data is the ink.

Generic Cold Email

21% average open rate

Hi Randy,

I noticed your company is in the water treatment space. We help companies like yours reach new customers.

Would you be open to a quick chat?

Best,

[Sales Guy]

Directory-Powered Email

60% open rate

Hey Randy,

Noticed AquaPure just expanded into Texas and you're hiring BDMs.

I run TapWaterData — we have verified procurement contacts for 1,142 Texas utilities.

Happy to show you the data if useful for ramp-up.

Want the Full Spec?

I'm sharing the exact product spec I gave Claude Code to build this system. ICP definition, pipeline stages, data model, everything.

Subscribe to Get the Spec

7 Days of Real Numbers

I let it run for a week. Here's everything -- good and bad.

- 665 companies discovered

- 417 classified as A-tier

- 306 outreach drafts

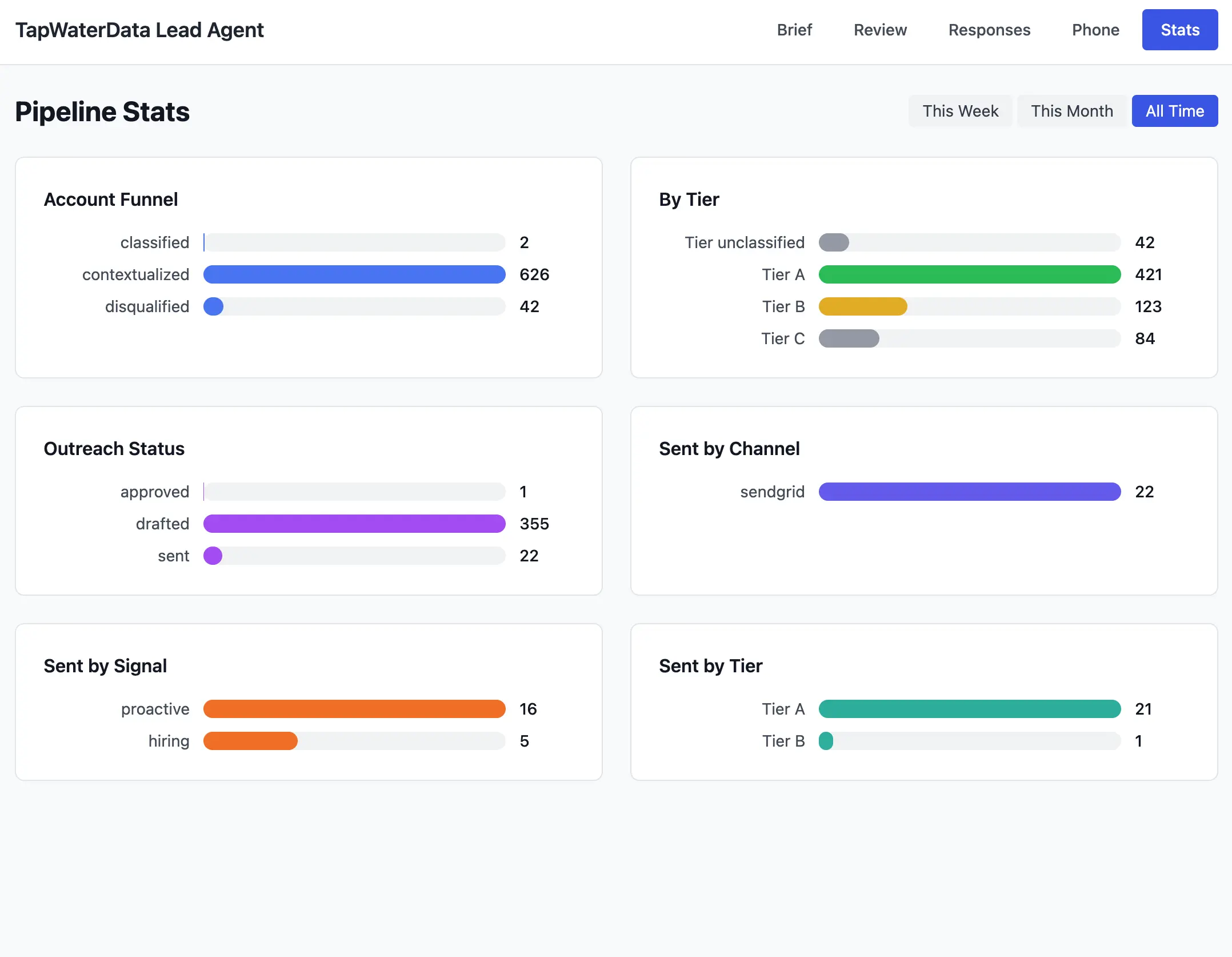

Pipeline Stats Dashboard

The dashboard tracks every stage: account funnel, tier classification, outreach status, send channel, and signals. All data updates in real time as the pipeline runs.

Email Performance

- Open rate: 60% (vs 21% industry average)

- Bounce rate: 0%

- Total sends: 22 (manually approved)

- One email opened 3x (someone's interested)
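A note on how a number like that is usually computed: one person opening an email three times should count once, so the rate is unique opened emails divided by emails sent. Here's a small sketch, assuming the tracking events have already been reduced to a list of email IDs. The `OpenEvent` shape and `uniqueOpenRate` are hypothetical names, not SendGrid's API.

```typescript
// One entry per tracked open. The same email can appear several times.
interface OpenEvent {
  emailId: string;
}

// Share of sent emails that were opened at least once (0 to 1).
function uniqueOpenRate(sentIds: string[], opens: OpenEvent[]): number {
  const sent = new Set(sentIds);
  if (sent.size === 0) return 0;

  const opened = new Set<string>();
  for (const event of opens) {
    // Ignore opens for emails that aren't in this batch.
    if (sent.has(event.emailId)) opened.add(event.emailId);
  }
  return opened.size / sent.size;
}
```

With made-up data, five sent emails and opens on three of them (one opened three times) give `0.6`, not `1.0`.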

Outreach Tracking: Real Companies, Real Opens

Each email was personalized using directory data these companies can't get anywhere else.

Some context on those numbers. A human doing this work -- finding 665 qualified companies, looking up contacts, writing personalized emails for each -- that's about 3 months full-time. ZoomInfo would charge $500-2,000/month for comparable data. I spent $27 and used a Mac Mini that was already sitting on my desk.

Total Weekly Cost: $11-27

Cost Breakdown:

- Firecrawl (scraping): ~$3/wk

- Anymail (email finding): ~$8-24

- Claude (AI): $0 (subscription covers it)

- SendGrid (sending): $0 (free tier)

- Server: $0 (Mac Mini already owned)
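Adding up the line items gives a range of roughly $11 to $27 a week, depending on how many email lookups run. The arithmetic, as a quick sketch (the `CostLine` type is just for illustration, and the Claude subscription is treated as already paid for, as above):

```typescript
interface CostLine {
  item: string;
  low: number;  // USD per week
  high: number; // USD per week
}

const weeklyCosts: CostLine[] = [
  { item: "Firecrawl", low: 3, high: 3 },
  { item: "Anymail", low: 8, high: 24 },
  { item: "Claude", low: 0, high: 0 },
  { item: "SendGrid", low: 0, high: 0 },
  { item: "Server", low: 0, high: 0 },
];

// Sum the low and high ends separately to get the weekly range.
function totalRange(lines: CostLine[]): { low: number; high: number } {
  return lines.reduce(
    (sum, line) => ({ low: sum.low + line.low, high: sum.high + line.high }),
    { low: 0, high: 0 },
  );
}

const total = totalRange(weeklyCosts);
console.log(`$${total.low}-${total.high} per week`); // prints "$11-27 per week"
```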


What Broke

I'm including this because every "how I built X" post that only shows wins is lying.


- Firecrawl credits ran out on day 2. Starter plan. Didn't realize how fast I'd burn through scraping credits. Pipeline stalled for 3 days before I even noticed. Lesson: set up billing alerts.

- Mac Mini went to sleep. Corrupted the SQLite database. Crashed the enrichment pipeline for 3 days. Fixed it by turning off sleep mode. Felt dumb.

- Timing signals never worked. The research stage that checks for hiring and expansion news — it depends on web scraping, and between the credit issue and the sleep issue, it failed every single cycle. The outreach still worked, just without the timing context.

- Only sent 22 of 306 drafts. The bottleneck was me. I was manually reviewing every email because I was nervous about a new system. The agent was ready to send hundreds. I was the slow part.
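Two of these failures went unnoticed for days, which is really a monitoring problem. One cheap guard is a heartbeat check: record when each stage last succeeded and flag any stage that has been silent too long. Here's a sketch under that assumption. `StageHeartbeat`, `findStalled`, and the 6-hour threshold are hypothetical, and how the alert gets delivered is left out.

```typescript
interface StageHeartbeat {
  stage: string;
  lastSuccess: Date; // when this stage last completed without error
}

// Return the names of stages that have not succeeded within the limit.
function findStalled(
  beats: StageHeartbeat[],
  now: Date,
  maxSilenceHours: number,
): string[] {
  const limitMs = maxSilenceHours * 60 * 60 * 1000;
  return beats
    .filter((b) => now.getTime() - b.lastSuccess.getTime() > limitMs)
    .map((b) => b.stage);
}

// Example: scraping has been quiet for 3 days, classification ran an hour ago.
const stalled = findStalled(
  [
    { stage: "scrape", lastSuccess: new Date("2024-01-01T00:00:00Z") },
    { stage: "classify", lastSuccess: new Date("2024-01-03T23:00:00Z") },
  ],
  new Date("2024-01-04T00:00:00Z"),
  6,
);
console.log(stalled); // prints [ 'scrape' ]
```

Run at the end of each hourly cycle, a check like this would have surfaced the stall within hours instead of days.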

The core works. Find, classify, enrich, write, send, track. 60% open rate says the approach is right. Infrastructure just needs hardening.

The Tech Stack (For the Builders)

I went with the simplest thing at every decision point. This is a solo operation, not a startup.

| What | Choice | Why |

|---|---|---|

| Runtime | Bun | Runs TypeScript directly. Built-in SQLite. No compile step. |

| Database | SQLite, WAL mode | One file. No Postgres, no connection strings, no ops. |

| HTTP | Hono | 50 lines for a full API. TypeScript-native. |

| AI | Claude Sonnet | Best at classification + writing natural emails. |

| Email finding | Anymail | Cheap. Simple API. |

| Email sending | SendGrid | Free tier. Open/click tracking included. |

| Scraping | Firecrawl | Search + scrape in one call. Returns markdown. |

| Process mgr | PM2 | One command for 24/7. Auto-restarts. Survives reboot once `pm2 startup` and `pm2 save` are set up. |

| Frontend | React + Tailwind | Review queue dashboard. Approve/reject drafts. |

Codebase Stats

- Source files: 39

- Lines of code: 8,800

- Orchestrator: 147 lines

- Database: 1 file

- Cloud services: 0

- Monthly hosting: $0

Your Directory Can Do This Too

I keep coming back to this: the hard part wasn't building the agent. Claude Code handled that. The hard part was the spec -- knowing what to ask for.

And the spec comes from answering four questions about your directory:

1. Who would pay to reach your audience?

2. What data do you have that they don't?

3. How does that data help them specifically?

4. What does the pipeline look like? (Find, classify, enrich, research, write, send -- same structure, different data.)

Job Board

Who pays: recruiting agencies, HR software vendors

Your data: who's hiring, how fast, what roles

"You're hiring 12 engineers this quarter. Our platform reaches 50K candidates in your area."

SaaS Directory

Who pays: dev tool vendors, marketing agencies, VCs

Your data: launches, traction, tech stacks

"Your competitor launched on our directory and got 2K visitors. Want the same exposure?"

Local Business Directory

Who pays: marketing agencies, POS vendors, insurers

Your data: new openings, closures, seasonal patterns

"47 restaurants opened in Austin last quarter. None have a website yet."

Get the Spec

Here's what I promised. The exact product spec I gave Claude Code to build this system. The ICP definition, the pipeline stages, the data model, everything.

If you run a directory and want to build your own version of this, the spec is the starting point. Adapt it for your niche, your data, your ICP. Then hand it to Claude Code.

Subscribe to Get the Spec

What's Next

This is week one. I'm tracking what happens next:

- Scaling sends. I've been the bottleneck. Time to batch-approve Tier A drafts.

- Fixing infrastructure. No more sleep mode. Firecrawl alerts. Monitoring.

- Tracking conversions. Replies, meetings, actual deals over 30 days.

- LinkedIn outreach. The system already generates LinkedIn messages. Adding delivery.
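Batch-approving Tier A drafts could be as simple as a filter over the review queue. Here's a sketch of one way to do it. The `Draft` shape and the score threshold are assumptions for illustration, not the dashboard's actual data model.

```typescript
type DraftStatus = "pending" | "approved" | "rejected" | "deferred";

interface Draft {
  id: number;
  tier: "A" | "B" | "C";
  score: number; // 0-100 classification score
  status: DraftStatus;
}

// Approve every pending Tier A draft at or above the score threshold.
// Everything else is returned unchanged and still needs manual review.
function batchApprove(drafts: Draft[], minScore: number): Draft[] {
  return drafts.map((d): Draft =>
    d.status === "pending" && d.tier === "A" && d.score >= minScore
      ? { ...d, status: "approved" }
      : d,
  );
}
```

Already rejected or deferred drafts are never touched, so a batch pass can't undo an earlier manual decision.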

The real question isn't whether the agent can find prospects and write emails. It can. The question is whether directory-powered outbound converts better than generic tools.

I think it does. Because nobody else has my data.

I'll share the results as they come in.

-- Andy